Update Nov 27, 2014: Just posted this YouTube video that shows how to easily identify top SharePoint Performance Problems: SharePoint Performance Analysis in 15 Minutes

SharePoint is without question a fast-growing platform, and Microsoft is making lots of money with it. It's been around for almost a decade and grew from a small list and document management application into an application development platform built on top of ASP.NET, with its own API for managing content in the SharePoint Content Database.

Over the years many things have changed – but some haven't. For example, SharePoint still uses a single database table to store ALL items of every SharePoint list. This brings me straight to the #1 problem I have seen when working with companies that implemented their own solutions based on SharePoint.

This blog post shows my findings, gathered mainly with Dynatrace, which you can also download and use for free in your own environment.

#1: Iterating through SPList Items

As a developer I get access to an SPList object, either from the current SPContext or by creating an SPList object for a list identified by its name. SPList provides an Items property that returns an SPListItemCollection object. The following code snippet shows one way to display the Title column of the first 100 items in the current SPList:

```csharp
SPList activeList = SPContext.Current.List;
for (int i = 0; i < 100 && i < activeList.Items.Count; i++)
{
    SPListItem listItem = activeList.Items[i];
    htmlWriter.Write(listItem["Title"]);
}
```

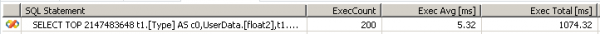

Looks good, right? The code works fine and performs well in a local development environment, yet it is the number 1 performance problem I've seen in custom SharePoint implementations. The problem is the way the Items property is accessed: it queries ALL items of the current SPList from the Content Database and, "unfortunately", does so every time we access it. The retrieved items ARE NOT CACHED. In the loop above we access the Items property twice per iteration, once to read Count and once to fetch the actual item by its index. Analyzing the actual ADO.NET database activity of that loop shows the following interesting result:

Problem: The same SQL statement, which retrieves ALL items of this list from the content database, is executed over and over again. In my example above I had 200 SQL calls (100 iterations with 2 accesses each), adding up to more than 1s of SQL execution time.

Solution: The solution is easy but, unfortunately, still rarely used: store the SPListItemCollection object returned by the Items property in a variable and use that variable in your loop:

```csharp
SPListItemCollection items = SPContext.Current.List.Items;
for (int i = 0; i < 100 && i < items.Count; i++)
{
    SPListItem listItem = items[i];
    htmlWriter.Write(listItem["Title"]);
}
```

This queries the database only once and we work on an in-memory collection of all retrieved items.
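To make the difference concrete, here is a small, self-contained C# simulation. No SharePoint API is involved, and it needs C# 9 or later for top-level statements. A hypothetical local function, FetchAllItems(), stands in for the Items property: every call counts as one database query and returns a fresh copy of all items, mimicking the "no caching" behavior described above. With at least 100 items in the list, the first loop triggers 200 simulated queries (one for Count and one for the indexer on each of 100 iterations), while the cached version triggers exactly one.

```csharp
using System;
using System.Collections.Generic;

// Simulation only: queryCount stands in for round trips to the content database.
int queryCount = 0;
const int itemsInList = 500;    // hypothetical list size
const int itemsToDisplay = 100;

// Mimics an Items property that re-reads the whole list on every access.
List<string> FetchAllItems()
{
    queryCount++;
    var all = new List<string>(itemsInList);
    for (int i = 0; i < itemsInList; i++) all.Add($"Item {i}");
    return all;
}

// Pattern 1: "property" accessed inside the loop (condition + indexer each time).
var uncached = new List<string>();
for (int i = 0; i < itemsToDisplay && i < FetchAllItems().Count; i++)
{
    uncached.Add(FetchAllItems()[i]);
}
int uncachedQueries = queryCount;

// Pattern 2: fetch once, keep the collection in a local variable.
queryCount = 0;
var cachedItems = FetchAllItems();
var cached = new List<string>();
for (int i = 0; i < itemsToDisplay && i < cachedItems.Count; i++)
{
    cached.Add(cachedItems[i]);
}
int cachedQueries = queryCount;

// prints: uncached: 200 queries, cached: 1 query
Console.WriteLine($"uncached: {uncachedQueries} queries, cached: {cachedQueries} query");
```

Note that on the final check (i == 100) the `&&` short-circuits, so the Count access is skipped; that is why the total is exactly 200 rather than 201.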

#2: Requesting too much data from the content database

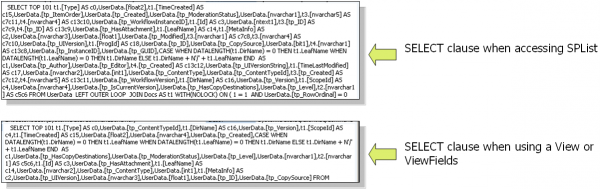

Accessing data from the Content Database through the SPList object is convenient. But every time we do so through the Items property, we end up requesting ALL items of the list. Look closer at the SQL statement shown in the example above: it starts with SELECT TOP 2147483648 and returns all columns defined in the current SPList.

Most developers I worked with were not aware that there is an easy way to query only the data you really need: the SPQuery object. SPQuery allows you to:

a) limit the number of returned items

b) limit the number of returned columns

c) query specific items using CAML (Collaborative Application Markup Language)

Limit the number of returned items

If I only want to access the first 100 items in a list, or page through items in steps of 100 (e.g. when implementing data paging in my Web Parts), I can use the SPQuery RowLimit and ListItemCollectionPosition properties. Check out Page through SharePoint Lists for a full example:

```csharp
SPQuery query = new SPQuery();
query.RowLimit = 100; // we want to retrieve 100 items
query.ListItemCollectionPosition = prevItems.ListItemCollectionPosition; // starting at a previous position
SPListItemCollection items = SPContext.Current.List.GetItems(query);
// now iterate through the items collection
```

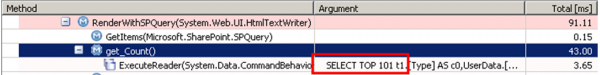

The following screenshot shows that SharePoint actually takes the RowLimit value and uses it in the SELECT TOP clause to limit the number of rows returned. It also uses the ListItemCollectionPosition in the WHERE clause to retrieve only items with an ID greater than the previous position.
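Building on that, a common paging loop feeds the position returned with one page into the query for the next page, until SharePoint returns no further position (null). The sketch below assumes the SharePoint server object model (Microsoft.SharePoint) and that htmlWriter is the HtmlTextWriter of the current control, as in the earlier snippets; ListPaging and WriteTitlesInPages are hypothetical names.

```csharp
using Microsoft.SharePoint;
using System.Web.UI;

public static class ListPaging
{
    // Writes the Title of every item while fetching at most 100 rows per query.
    public static void WriteTitlesInPages(SPList list, HtmlTextWriter htmlWriter)
    {
        SPQuery query = new SPQuery();
        query.RowLimit = 100;                          // ends up in SELECT TOP
        query.ViewFields = "<FieldRef Name='Title'/>"; // only the column we render

        do
        {
            SPListItemCollection page = list.GetItems(query); // one query per page
            foreach (SPListItem item in page)
            {
                htmlWriter.Write(item["Title"]);
            }
            // Position of the next page; null once the last page has been read.
            query.ListItemCollectionPosition = page.ListItemCollectionPosition;
        }
        while (query.ListItemCollectionPosition != null);
    }
}
```

Each round trip transfers at most 100 rows, so the cost grows with the number of pages actually rendered rather than with the size of the list on every access.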

Limit the number of returned columns

If you only need certain columns from the list, use SPQuery.ViewFields to specify which columns to retrieve. By default all columns are queried, which puts extra stress on the database to retrieve the data, requires more network bandwidth to transfer the data from SQL Server to SharePoint, and consumes more memory in your ASP.NET worker process. Here is an example of how to use the ViewFields property to retrieve only the ID, Text Field and XYZ columns:

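```csharp
SPQuery query = new SPQuery();
// FieldRef Name expects a column's internal name; a column created with the
// display name "Text Field" usually gets the internal name Text_x0020_Field.
query.ViewFields = "<FieldRef Name='ID'/><FieldRef Name='Text_x0020_Field'/><FieldRef Name='XYZ'/>";
```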

Looking at the generated SQL makes the difference to the default query mode obvious:

Query specific elements using CAML

CAML allows you to be very specific about which elements you want to retrieve. The syntax is a bit “bloated” (that is my personal opinion) as it uses XML to define a SQL WHERE-like clause. Here is an example of such a query:

```csharp
SPQuery query = new SPQuery();
query.Query = "<Where><Eq><FieldRef Name='ID'/><Value Type='Number'>15</Value></Eq></Where>";
```

As I said, it is a bit “bloated”, but hey, it works 🙂

Problem: The main problem I've seen is that developers go straight to the SPList object and retrieve list items only through it, which pulls far too much data from the Content Database.

Solution: Use the SPQuery object and its features to limit the number of returned items and columns.
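Putting the three SPQuery features together, a query that filters, trims columns and caps the row count might look like the sketch below. It assumes the SharePoint server object model and a hypothetical single-line text column with the internal name Status; ListQueries and GetOpenItems are made-up names for illustration.

```csharp
using Microsoft.SharePoint;

public static class ListQueries
{
    // Returns at most 10 items whose (hypothetical) Status column equals "Open",
    // fetching only the ID and Title columns instead of every column of the list.
    public static SPListItemCollection GetOpenItems(SPList list)
    {
        SPQuery query = new SPQuery();
        // CAML filter: SPQuery.Query takes the <Where> element without a <Query> wrapper.
        query.Query =
            "<Where><Eq><FieldRef Name='Status'/><Value Type='Text'>Open</Value></Eq></Where>";
        // Column projection.
        query.ViewFields = "<FieldRef Name='ID'/><FieldRef Name='Title'/>";
        // Row cap.
        query.RowLimit = 10;
        return list.GetItems(query);
    }
}
```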

#3: Memory Leaks with SPSite and SPWeb

In the very beginning I said “many things have changed – but some haven’t”. SharePoint still uses COM components for some of its core features, a relic of “the ancient times”. There is nothing wrong with COM itself, but there is a catch in the memory management of COM objects. Developers use SPSite and SPWeb objects to gain access to the Content Database. What is not obvious is that these objects have to be disposed explicitly so that the underlying COM objects are released from memory once they are no longer needed.

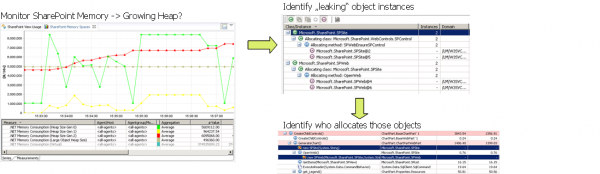

Problem: When SharePoint installations do not dispose SPSite and SPWeb objects, the ASP.NET worker process leaks memory (native and managed) and eventually gets recycled by IIS when it runs out of memory. Recycling means losing all current user sessions and paying a performance penalty for the users who hit the worker process after the recycle has finished (the first requests are slow during startup).

Solution: Monitor your memory usage to identify whether you have a memory leak. Use a memory profiler to identify which objects are leaking and what is creating them. For SPSite and SPWeb, follow the best practices described on MSDN. Microsoft also provides a tool called SPDisposeCheck that identifies leaking SPSite and SPWeb objects.
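The core of those best practices fits in a few lines: dispose the SPSite and SPWeb objects you create yourself (a using block does that even if an exception is thrown), but never dispose the objects SharePoint hands you through SPContext, because SharePoint owns and cleans up those. A sketch, assuming the server object model; SiteAccess and its method names are hypothetical.

```csharp
using Microsoft.SharePoint;

public static class SiteAccess
{
    // Both objects are created here, so both are disposed here.
    public static int CountLists(string siteUrl)
    {
        using (SPSite site = new SPSite(siteUrl))
        using (SPWeb web = site.OpenWeb())
        {
            return web.Lists.Count;
        } // web.Dispose() and site.Dispose() run here, releasing the COM resources
    }

    // Objects obtained from SPContext belong to SharePoint: do NOT dispose them.
    public static string CurrentWebTitle()
    {
        SPWeb web = SPContext.Current.Web;
        return web.Title;
    }
}
```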

The following screenshot shows the process of monitoring memory counters, using memory dumps and analyzing memory allocations using Dynatrace:

#4: Index columns do not necessarily improve performance

During the research for my first SharePoint engagements I discovered several “interesting” implementation details. Having only a single database table to store all list items makes it a bit tricky to propagate index column definitions down to SQL Server. Why is that? If we look at the AllUserData table in the SharePoint Content Database, we see that it contains generic columns for every column you can ever have in any SharePoint list. We find, for instance, 64 nvarchar columns, 16 int columns, 12 float columns, …

If SharePoint simply created a SQL index whenever you defined an index on, say, the first text column of “My SharePoint List 1”, another on the second number column of “My SharePoint List 2”, and so on, you would end up with database indices on pretty much every column in your Content Database.

Problem: Index columns can speed up access to SharePoint lists, but due to the way SharePoint implements indices we have the following limitations:

a) for every defined index, SharePoint stores the index value of every list item in a separate table. A list with, let's say, 10,000 items and one index column means 10,000 rows in AllUserData plus 10,000 additional rows in the NameValuePair table (used for indexing).

b) queries only make use of the first index column on a table. Additional index columns are not used to speed up database access

Solution: Really think about your index columns. They definitely help when you do lookups on text columns. Keep in mind the additional overhead of each index, and that multiple indices don't give you any additional performance gain.
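If you conclude that a particular index is worth its overhead, it can also be defined from code. A minimal sketch, assuming the server object model; IndexSetup and IndexColumn are hypothetical names, and the column is looked up by its internal name.

```csharp
using Microsoft.SharePoint;

public static class IndexSetup
{
    // Marks a single column as indexed. Based on the observations above, each
    // indexed column costs one extra row per list item, so index selectively.
    public static void IndexColumn(SPList list, string internalName)
    {
        SPField field = list.Fields.GetFieldByInternalName(internalName);
        field.Indexed = true;
        field.Update(); // persists the change to the field definition
    }
}
```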

#5: SharePoint is not a relational database for high volume transactional processing

This problem should actually be #1 on my list, and here is why. In the last 2 years I've run into several companies that made one big mistake: they thought SharePoint was the most flexible database on earth because they could define lists on the fly and modify them as needed, without worrying about the underlying database schema or about updating their data access logic after every schema change. Besides this belief, these companies had something else in common: they had to rewrite their SharePoint applications, replacing the Content Database in most parts of them with a “regular” relational database.

Problem: If you read through the previous 4 problems, it should be obvious why SharePoint is not a relational database and why it should not be used for high-volume transactional processing. All list items are stored in a single table. Database indices are implemented using a second table that is then joined to the main table. Concurrent access to different lists is a problem because the data comes from the same table.

Solution: Before starting a SharePoint project, really think about what data you have, how frequently you need it, and how many different users modify it. If you have many data items that change frequently, you should seriously consider using your own relational database. The great thing about SharePoint is that you are not bound to the Content Database: you can do practically whatever you want, including accessing any external database. Be smart and don't end up rewriting your application after you've already invested too much time.
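As a minimal sketch of that alternative, plain ADO.NET works from SharePoint code just as it does from any other .NET application. The dbo.Orders table, its Status column, and the OrderStore class are hypothetical, and the connection string is assumed to be supplied by the caller.

```csharp
using System.Data.SqlClient;

public static class OrderStore
{
    // Counts rows in a hypothetical dbo.Orders table with a given status.
    public static int CountOrders(string connectionString, string status)
    {
        using (var connection = new SqlConnection(connectionString))
        using (var command = new SqlCommand(
            "SELECT COUNT(*) FROM dbo.Orders WHERE Status = @status", connection))
        {
            // A parameter instead of string concatenation avoids SQL injection
            // and lets SQL Server reuse the query plan.
            command.Parameters.AddWithValue("@status", status);
            connection.Open();
            return (int)command.ExecuteScalar(); // COUNT(*) returns an int
        }
    }
}
```

Here the schema, indices and transactions are under your control, which is exactly what the single shared AllUserData table cannot offer for high-volume transactional data.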

Final words

These findings are a summary of the work I did on SharePoint in the last 2 years. I've spoken at several conferences and worked with different companies to help them speed up their SharePoint installations.

As SharePoint is built on the .NET platform you might also be interested in .NET monitoring.
